In the early 1980s, the American video game industry entered its second generation and was making money hand over fist. Arcades popped up across the country, the Atari 2600 dominated competitors in the home market, and Pac-Man Fever (no relation to the same-named trope) held America in its iron grip.

But in 1983, something went terribly wrong. Dozens of game manufacturers and console producers went out of business. Production of new games stalled out. The American console market as a whole dried up for two years. And when it returned, the American stalwarts found themselves catching up to the newly dominant Japanese entrants.

This was the Great Video Game Crash of 1983. And here's how it went down.

The Fall of Atari

Any history of the Crash has to address the downfall of Atari, who dominated the American video game scene and whose fortunes were indelibly linked to the crash.

In those early days, Atari owned the rights to build physical cartridges for the Atari 2600. This meant that only Atari could make games for the 2600 - there were no third-party developers. But Atari was stingy with its in-house designers, refusing to give them royalties or authorial credit for their work. This bred a culture of dissent that drove a lot of talent to quit the company, with many moving to competing video game manufacturers. Since Atari dominated the home console market back then, most competitors were making Arcade Games; companies like Williams Electronics poached a lot of talent from Atari. But others went on to start their own companies, of which the most successful was a nascent Activision.

Activision used its founders' knowledge of the Atari system to make games that could be played on the Atari 2600. Atari was having none of that, and in 1980 they sued Activision for stealing trade secrets. But the tide quickly turned against Atari, as the courts decided that Atari could not actually prevent a third-party company from making games compatible with its consoles. Atari was forced to settle in 1982, accepting royalty payments from Activision in exchange for allowing them to become the first ever third-party developer. This decision would reverberate in the electronics world for decades, and it inspired many others to follow suit and get into the video game market. Some would make their own home consoles, like the Intellivision.

While Atari was still the undisputed leader in the home console market, it failed to adapt its strategy for the changing industry landscape. Its strategy amounted to selling consoles as cheaply as possible while relying on game sales to make a profit. (No wonder they were so keen on preventing third-party development.) The strategy worked when Atari had a home-market monopoly on Space Invaders and Asteroids, but when competing companies started producing better products (or cheaper but comparable work), either for the 2600 itself or for the rapidly emerging superior hardware its competitors offered, Atari's profits suffered.

Atari shoved out as many games as it could in late 1982, most notably the home port of Pac-Man and the video game adaptation of E.T. the Extra-Terrestrial. Both games were rushed to market as quickly as possible (E.T. was programmed in less than six weeks), and they quickly earned a reputation as two of the worst games ever made. Atari was banking on these games being system sellers and produced insane numbers of cartridges - after all, how can you lose money making Pac-Man? As it turned out, although initial sales were brisk (and record-breaking in Pac-Man's case), once word spread of their poor quality, the sales dried up. A desperate Atari kept churning out games, eventually making more copies of Pac-Man than there were consoles to play them on. But there were no takers. Atari famously had to deal with this by burying the excess cartridges in a landfill in Alamogordo, New Mexico. That's millions of dollars' worth of hardware just thrown away.

November 1982 also saw the release of the 2600's successor, the Atari 5200, but it failed to live up to expectations. Not only were the joysticks notoriously finicky and fragile, but Atari also found out the hard way that successor systems need Backwards Compatibility. The 5200 was not backwards-compatible with the 2600, whereas competitors like the ColecoVision could play 2600 games. Atari also tried its hand in the PC market with the 1200XL computer, which was an even bigger flop than the 5200, mostly because it was rushed to market and had serious compatibility issues of its own with the earlier 400 and 800 library (and also because Atari was sucked into a price war with Commodore).

December 7, 1982 is the closest thing the gaming industry has to a "Black Tuesday": during a shareholder meeting, at which observers predicted Atari would announce a 50% profit increase, Atari announced its projection of just 10-15%. The next day, the stock of Atari's parent company Warner Communications plummeted by 33%. Then came a minor scandal in which it was discovered that Atari president Ray Kassar had sold 5,000 shares in Atari just a half-hour before the announcement, although he insisted the sale was legitimate.

By 1983, Atari's inferior technology and library had eroded its customer base, and its wildly optimistic production runs had eroded its profit margin. By the end of 1983, Atari had racked up nearly half a billion dollars in losses. The company was soon sold off by Warner (along with several other branches, including MTV and its sister channel Nickelodeon) in an attempt to recoup the debt. It was then split up and limped along into the next few decades, a shadow of its former self.

Quantity over quality

If it had just been Atari who was suffering, it couldn't really have been considered a "crash". But its competitors were having issues of their own.

A glut of companies tried to cash in on Atari's success, galvanized by Activision's entry. This left consumers with a wide range of choices for home consoles: you could get a Bally Astrocade, ColecoVision, Emerson Arcadia 2001, Magnavox Odyssey (or Odyssey²), Intellivision, Vectrex, or Channel F-System II. There was also the Video Arcade and the Super Video Arcade sold by Sears under their "Tele-Games" brand, which were actually a rebranded Atari 2600 and Intellivision respectively. As it turned out, that was too many choices. None of these consoles could succeed in such a competitive marketplace; nobody was going to buy more than one console back then, and the market wasn't big enough to fit everybody. Most of these systems were discontinued quite quickly. Atari won against them mostly on the strength of name recognition.

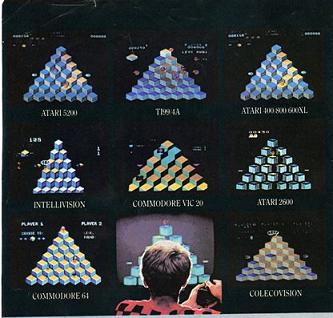

Even the systems that survived struggled to really compete with each other. Atari, Coleco, and Mattel (the makers of the Intellivision) were all making games for each other's consoles. With the limitations of the hardware and the plethora of unbuilt Video Game Tropes, there wasn't much you could do on a home console in the early 1980s. Hence, the image at the top of this page - you had the same game playable on basically every system worth buying, so neither the hardware nor the game library stood out in favor of any one console. It also didn't help that said companies took every chance they got to sabotage each other; Coleco infamously made their version of Donkey Kong a pack-in title with the ColecoVision and deliberately made inferior ports for the Atari 2600 and Intellivision, driving consumers to their own console.

But the bigger problem is that with the games, there was basically no quality control. Nobody was testing whether their games were even playable, not even the giant Atari. And once Activision blew the doors open, anybody could make a cartridge and sell it for cheap. This led to a glut of Shovelware, much of it coming from non-video game companies seeing an opportunity to cash in, thinking that video games would sell regardless of quality. They tended to make mail-in exclusive tie-in games to their corporate properties like The Kool-Aid Man. At least 90% of it was unplayable crap.

Consumers of the time had no way of really knowing what was good and what wasn't. They didn't have the resources we have today; no Internet as we know it, no video game magazines. All they had were word of mouth and the covers of the games themselves, which happily misrepresented the game therein to get people to buy it. A few stores had demo stations set up for potential customers, but these didn't really allow anyone to play long enough to discover a Game-Breaking Bug, and no stores would warn you about any themselves. The shelves would put the tried and tested titles right next to the Shovelware with no way to distinguish them. This eventually killed enthusiasm for video games, because consumers tended to feel once bitten, twice shy.

Lack of quality control also extended to the content of the games as well. Some games were "pornographic" (well, technically speaking), like the infamous Mystique games. This led to a backlash against Atari for selling "adult" content alongside the garden-variety video games. Because Atari lacked quality control and had no involvement in the games' development, they didn't even know what was being made for their console. Atari had to admit that they were out of the loop, which didn't exactly paint them in a great light.

And retailers were getting burned, too. There were so many video games out there that stores couldn't give them away. They just sat in the bargain bin for $4 a pop. Stores tried returning the surplus to the game companies, but the companies weren't interested. Meanwhile, the drastic discounts to get people to buy the shovelware were also depressing the prices of the good stuff, so the old strategy of profiting off the Killer App was made impossible even for companies who made good games.

Console games are just a fad

Although Atari took the country by storm in the early 1980s, there was an equally vocal contingent of people who were convinced video games were a Flash In The Pan Fad. Some of them were Moral Guardians who didn't understand them and thought they were bad for that reason. Others thought they were a waste of time. And they may have had a point, what with all the Shovelware on the consoles. Many games at the time were over in five minutes, or had only a single screen of content, and even the best games of the era rarely broke more than an hour of playtime to see everything they had to offer. The media at the time thought of video games as a novelty, and an expensive one at that (since you had to buy a console and the games and hook everything up). 1983 essentially "proved" to the naysayers that they were right.

Relatedly, some people did see a future in video games, but on PCs rather than home consoles. It was right around this time that the personal computer made its first competitively priced entry into American society. PCs had software libraries that catered to the early gaming crowd, but their educational and office software gave them the edge over home consoles. They could thus be marketed both to gamers and non-gamers. One group they targeted were Education Mamas, who around this time were worried that if their kids weren't computer-literate, they'd be shut out of good colleges and the job market (which, in hindsight, was quite justified). Certain computers, like the Commodore 64, were priced and marketed to compete with the home consoles - and they did this adeptly.

Home computers were also rapidly outstripping home consoles in the field of memory capacity, making it possible for game programmers to write larger programs on floppy disks. Games like Montezuma's Revenge and Fort Apocalypse had to have features cut to fit on 16KB home console cartridges. If you wanted more sophisticated games, and you were willing to shell out some money, you went with a PC rather than a home console. Europe kind of saw a middle ground where floppy drives were too expensive, but PC gamers played arcade-style games on cassettes.

This is why, even as the home game market suffered a huge blow from the death of the home console, the few surviving game companies could write games for the growing PC base, especially the Commodore 64. The rest of the market survived on Arcade Games, which were declining much more slowly because there was still a social aspect to these games — instead of playing at home, you went to a public place and played alongside others, and those arcade houses were willing to make the investment in the hardware. Minor arcade classics like Paperboy, Punch-Out!!, Space Ace, Karate Champ, and Gauntlet saw release during this period, and many of them would end up ported to PCs and home consoles (with varying degrees of success) after that market's revival.

The rest of the world survives

The interesting thing about the Crash is that for all the talk about it, it only happened in North America. Elsewhere in the world, it made little impact. But that's mostly because there wasn't much of a home console market outside North America to begin with.

In Europe, the gaming market was already dominated by 8-bit home microcomputers, predominantly the Commodore 64 and the Sinclair ZX Spectrum. Europe relied on the far cheaper tape-distribution system, which became the backbone of its video game industry for the next decade. The computers were fairly inexpensive, and games were typically priced to be affordable to children and teenagers who could buy them with their own pocket money, resulting in microcomputers becoming extremely popular with European kids as game devices, rather than as the business and education machines their manufacturers initially had in mind. Even when the NES and Master System showed up, it took a lot longer for them to catch on in Europe than they did elsewhere. The micros' affordability and the ease of learning how to code software for them enabled hundreds of amateur coders to single-handedly write and release games for the Speccy, C64, and other micros. These "bedroom coders" were vaunted by European gamers at levels ranging from "cult hero" (e.g. Jeff Minter, Matthew Smith) to "legend" (e.g. Bell and Braben, the Oliver Twins). But that didn't prevent a number of talented developers from making enough stupid decisions to snatch defeat from the jaws of victory (e.g. the saga of Imagine Software). Even then, the UK was hit by a smaller-scale hardware crash in 1984, causing the less popular machines like the Dragon 32 and Jupiter Ace to disappear entirely and bigger companies Sinclair and Acorn to be taken over by Amstrad and Olivetti respectively.

Japan had a massive arcade base thanks to its longstanding Pachinko parlors and Mahjong dens. Home consoles were seen mostly as American curiosities, even though the country had its own consoles, like the Epoch Cassette Vision and the Gakken TV Boy, which felt more like 4-bit machines graphics-wise. Although the Crash provided Japan the perfect storm for domestic development of computer technology (because the parts were so cheap now all of a sudden), much of that investment went into gaming computers like the MSX, which was released in late 1983. Although Japan couldn't hang with the PC competition in Europe and North America for very long, it also parlayed some success into the domestic home console, Nintendo's Famicom. That one came out right as the Crash was beginning in North America, so it looked a lot like Japan was a latecomer who was about to lose their bet that home consoles would ever be a "thing". We'll see how that bet worked out in a little bit.

That's not to say the Crash didn't have any impact on Japan. In the early '80s, Sega and Coleco had a mutually beneficial business relationship, resulting in near-arcade-perfect ports of big Sega games like Zaxxon for the ColecoVision, and Sega shoring up the Japanese distribution rights to the ColecoVision itself. Coleco's downfall during the Crash resulted in Sega going it alone for their first console, the SG-1000, which was effectively a ColecoVision clone with near-identical specs.

Meanwhile, Latin America was a little weird. They were long used to being the dumping ground for crappy American products, so it was no surprise that American video game companies tried dumping their glut of crappy games on Latin America, particularly Mexico. Like the U.S., Latin America had no Internet, gaming magazines, or any other way of telling that a given game was crap. Unlike the U.S., they were happy to play what the Americans considered crap - but only if they could afford it. Since most Latin Americans couldn't afford a personal computer, the gaming PC was a non-factor there. And the only home consoles that caught on down there were the overproduced ones in America - the Atari 2600, ColecoVision, and Intellivision. But those were sufficiently popular that not only was there never a "crash", but the NES couldn't even dethrone the older consoles until the late 1980s. Atari games and consoles were being sold in Latin America until the 2000s, when the hardware started falling apart, and even today you can get them second-hand.

How Nintendo revived the American console market

The Crash killed the American home console market for two years. Video game sales dropped from $3 billion in 1982 ($8.37B in 2021) to as low as $100 million in 1985 ($250M in 2021). That's a dropoff of 97%, which caused a majority of game companies to go out of business. By 1985, nobody was making home consoles anymore in America.

Enter further proof, to people in the 1980s, that Japan was about to take over the world.

In 1983, as Atari was busy trying to figure out how to save its video game business, it looked across the Pacific and noticed a company named Nintendo that was doing phenomenally well. They reached out to Nintendo's fledgling North American branch, Nintendo of America, and struck a deal for the home computer rights to Nintendo's mega-hit, Donkey Kong. Coleco already owned the rights to the console version, but Atari could put it on their Atari 800 PC. Nintendo of Japan was so impressed with how well the deal had gone that their president told NOA to offer Atari the worldwide rights to the Famicom. Atari and Nintendo hammered out a deal where Atari would manufacture and sell the machines, Nintendo would provide software support and receive a large royalty on every machine sold, and Nintendo would port popular Atari games to the Famicom.

The two companies agreed to finalize the deal at that summer's Consumer Electronics Show. But when they got there, both parties were very surprised to see a version of Donkey Kong proudly playing on Coleco's Adam computer. What happened next left everyone ashamed: Atari thought Nintendo had double-crossed them and asked what the hell was going on, but Nintendo didn't know. Nintendo in turn met with Coleco at CES, and Nintendo's Japanese president, Hiroshi Yamauchi, who was normally a very calm and stoic man, completely lost his cool and verbally blasted the Coleco delegation on the show floor. Coleco's excuse was that the game was only a technical test, and that the Adam used cartridges and so was technically a game console and not a PC. Nintendo didn't buy it and threatened legal action against Coleco if they sold a Donkey Kong game on the Adam, which got Coleco to back down.

All this wound up dramatically slowing down the Famicom distribution deal, because Atari, Coleco, and Nintendo spent the rest of 1983 hashing out the Donkey Kong rights. And as the Great Crash caught up with Atari, the deal fell through. Nintendo was devastated, but it worked out for them in a number of ways. First, Nintendo had already done the ground work for porting Atari games to the Famicom, which put them in touch with HAL Laboratory and Satoru Iwata, who would become key players in Nintendo's future. Second, they would later learn from a former Warner attorney that Atari never had the money to make the deal in the first place — they just wanted to size up a potential business rival, tie them up in negotiations, and learn about the Famicom's hardware so they could make their own competitor. Indeed, Atari basically saw Nintendo and the Famicom as a way out of the ongoing Crash, and Nintendo lucked out of having to deal with them. And third, Nintendo had gotten a long, hard look at the Famicom's potential in the US market, and they realized they didn't need Atari — they could do it on their own.

In 1984, Nintendo took advantage of the healthier marketplace for arcade games in the United States by creating the Nintendo Vs. System, which contained versions of popular Famicom games, modified for arcade play. These cabinets would find a very receptive audience in America, becoming some of the most popular arcade games of the era. This all gave Nintendo positive proof that their plans to bring their 8-bit games and hardware stateside might just be Crazy Enough to Work. Nintendo took note of the most popular Vs. System cabinets, Duck Hunt in particular, to determine which games would make the most enticing launch titles for their localized Famicom.

In 1985, Nintendo of America released the American version of the Famicom, the Nintendo Entertainment System, or NES. With an initial budget of $50 million, it was given a limited release in New York City for Christmas 1985. By then, the Crash had long decimated the American console market, and Nintendo had chosen to introduce the NES in one of the country's most difficult consumer markets. How was Nintendo going to make inroads in a market that was so skeptical of console gaming? Simple: by doing their homework. Before a single console touched American shores, Nintendo of America studied the crash, identified the causes, and created a plan to avoid them.

First, it had to solve the Shovelware problem. It did so with its own proprietary cartridge design. Unlike Atari with the 2600, Nintendo kept it totally secret; nobody knew how to make a Nintendo cartridge but Nintendo. Part of the secret was the "10NES" lockout chip: if a cartridge didn't include it, the console refused to run the game. Nintendo also enforced actual quality control, both in terms of playability and of content. This meant no more buggy games, and no more pornographic games. It tied all this together with the "Nintendo Seal of Quality", which was a mark on the cartridge that proved that Nintendo had reviewed and approved the game to ensure that it was almost entirely bug free and wouldn't brick the console playing it. Anything else was considered pirated and "play at your own risk", and retailers would now know which games were the real deal and which were Shovelware.

Second, it disguised the system to look less like a toy and more like a sophisticated piece of electronics equipment. Unlike the Japanese Famicom, and unlike the American consoles before it, the American NES would be a front-loading cartridge system, making it look much more like a VCR than a game console. It also bundled the system with the Robotic Operating Buddy and Zapper Light Gun peripherals, which looked much more like conventional toys. The former only worked with two games, and the latter didn't fare much better in the long run, but they both looked cool for the time, and that was more important. Nintendo was hoping to convince toy stores to carry the console even after having been burned by the crash.

Their solution to this was the definition of risky. Nintendo would offer the stores a deal: The first round of product would be free to the stores. Nintendo would come in, set up the product, and at the end of the trial period, the stores would only have to pay for sold product. Anything that wasn't sold, Nintendo would take back for free. Because manufacturers get their income from the stores ordering the product, and not direct customer purchases, this put all the risk on Nintendo, and would be business suicide if it didn't work. Nintendo chose as its test market a number of toy stores, including the flagship FAO Schwarz store, in New York City because, as the saying goes, "if you can make it in New York, you can make it anywhere."

Third, Nintendo had its Killer App: the original Super Mario Bros. It was solid, it was extensive, it was fun, and it was unlike anything anybody had played before. It combined the ease of the home console with the computing power and disk space of a PC game. It wasn't even bundled with the console originally (in either Japan or North America), but word of mouth soon came out, and it was this game that drove sales of the NES to the stratosphere.

The combined strategy worked, and after what turned out to be a very successful test run in New York (FAO Schwarz reportedly placed three restock orders in the first month), Nintendo went nationwide.

Nintendo's crazy idea to revive a moribund console market with a game involving a fat Italian plumber venturing across a land overrun by turtles and walking mushrooms to save a princess from a fire-breathing dragon-turtle proved to be Crazy Enough to Work. And in so doing, it ushered in a new era of gaming.

The effects of the Crash and Nintendo managing to revitalize the North American market on the global video game market are still felt to this day; although there were still some American-made consoles in the last years of the 20th century, none of them made much headway for various reasons, and Japan enjoyed a virtual monopoly on the global console market for 16 years, with Nintendo only being knocked off the top of the market by Sony's PlayStation in 1995. Even after Microsoft's Xbox broke that monopoly in 2001, that just meant that two out of the three major players were Japanese. 23 years later, the "Big Three" are still Nintendo, Sony, and Microsoft.